This destination is a CSV (comma-separated values) flat file.

Connection #

Adding a Destination #

- In the main window of the Designer, navigate to Server > Manage Destinations. The window “Manage Destinations” opens.

- Click [Add] to create a new destination. The window “Destination Details” opens.

- Enter a Name for the destination.

- Select the destination Type from the drop-down menu.

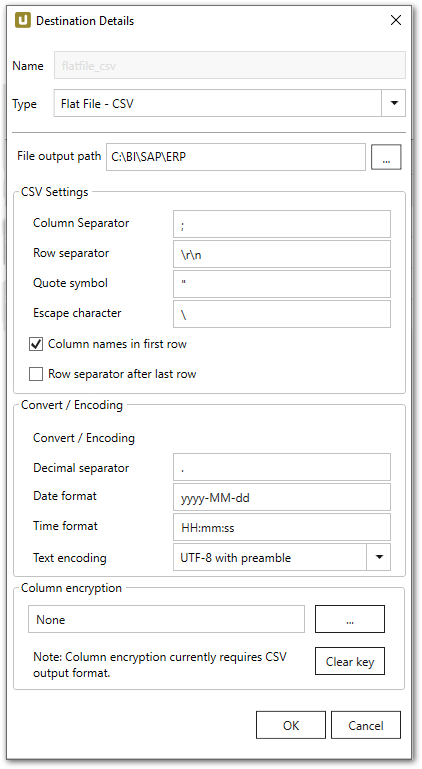

Destination Details #

File output path

Enter the directory to save the destination flat files in. If the entered folder does not exist, a new folder is created.

Note: To write flat files to a network drive, you need to:

- Enter the File output path in UNC format e.g., \\Server2\Share\Folder1.

- Run the Xtract Universal service by a user with write permission to the directory.

CSV Settings #

Column seperator

Defines how two columns in CSV are separated.

Row separator

Defines how two rows in CSV are separated.

Quote symbol

Defines which character is used to encase field data. A sequence of characters may be used as “Quote symbol”.

Quotation is applied in the following scenarios:

- The Column separator is part of the field data.

- The Quote symbol is part of the field data.

- The Row separator is part of the field data.

- The Escape character is part of the field data.

Escape character

When Escape character is part of the field data, the respective field containing this character is encased by the “Quote symbol”.

The default escape character is the backslash ‘\’. The field may remain empty.

Column names in first row

Defines if the first row contains the column names. This option is set per default.

Row separator after last row

Defines if the last row contains a row separator. This option is set per default.

Convert / Encoding #

Decimal separator

Defines the decimal separator of decimal number for the output. Dot (.) is the default value.

Date format

Defines a customized date format (e.g. YYYY-MM-DD or MM/DD/YYYY) for converting valid SAP dates (YYYYMMDD). Default is YYYY-MM-DD.

Time format

Defines a customized time format (e.g. HH-MM-SS or HH:MM:SS) for converting valid SAP times (HHMMSS). Default is HH:MM:SS.

Text Encoding

Defines the text encoding.

Column encryption #

The “Column Encryption” feature enables users to encrypt columns in the extracted data set before uploading them to the destination. By encrypting the columns you can ensure the safety of sensitive information. You can store data in its encrypted form or decrypt it right away.

The feature also supports random access, meaning that the data is decryptable at any starting point. Because random access has a significant overhead, it is not recommended to use column encryption for encrypting the whole data set.

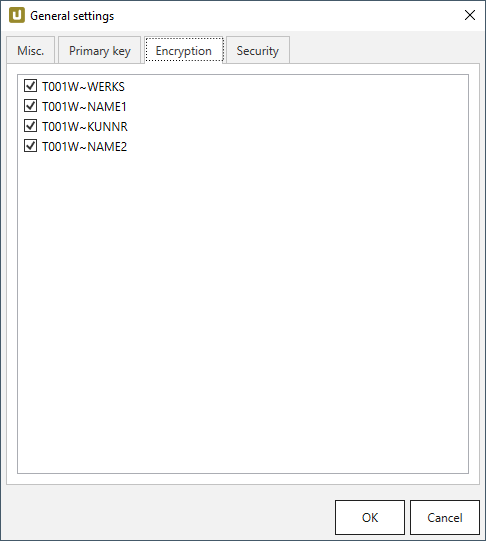

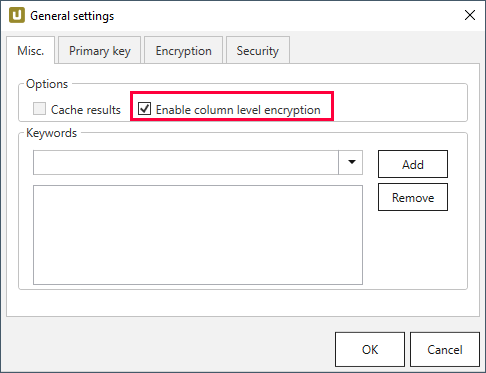

How to proceed

Note: The user must provide an RSA public key.

-

Select the columns to encrypt under Extraction settings > General settings > Encryption.

-

Make sure the Enable column level encryption checkbox is activated under Extraction settings > General settings > Misc..

-

Click […] in Destination Details > Column Encryption to import the public key as an .xml file.

-

Run the extraction.

-

Wait for XtractUniversal to upload the encrypted data and the “metadata.json” file to the destination.

-

Manually or automatically trigger your decryption routine.

Decryption

The decryption depends on the destination environment. Implementation samples for Azure Storage, AWS S3 and local flat file CSV environments are provided at GitHub. Included are the cryptographic aspect, which is open source and also the interface to read the CSV data and “metadata.json” which is not open source.

Technical Information

The encryption is implemented as a hybrid cryptosystem.

This means that a randomized AES session key is created when starting the extraction.

The data is then encrypted via the AES-GCM algorithm with the session key.

The implementation uses the recommended length of 96 bits for the IV.

To guarantee random access, each cell gets its own IV/nonce and Message Authentication Code (MAC).

The MAC is the authenticity token in GCM providing a signature for the data.

In the resulting encrypted data set, the encrypted cells are assembled like this:

IV|ciphertext|MAC

The IV is encoded as 7-Bit integer. The session key is then encrypted with the RSA public key provided by the user. This encrypted session key is uploaded to the destination as a “metadata.json” file, including a list of the encrypted columns and formatting information of the destination.

Settings #

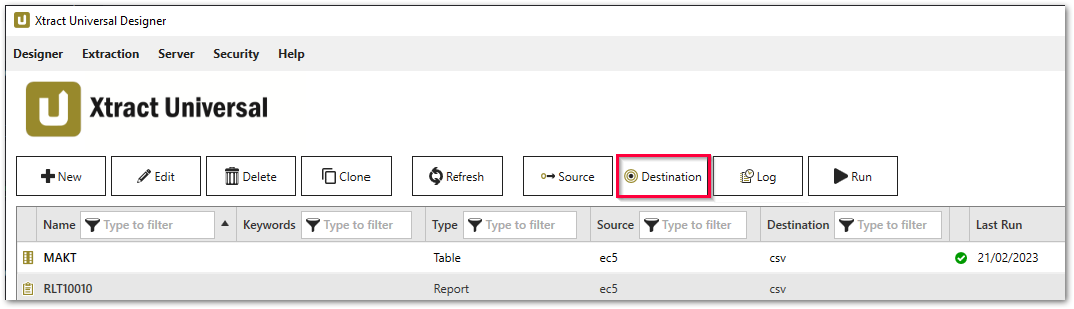

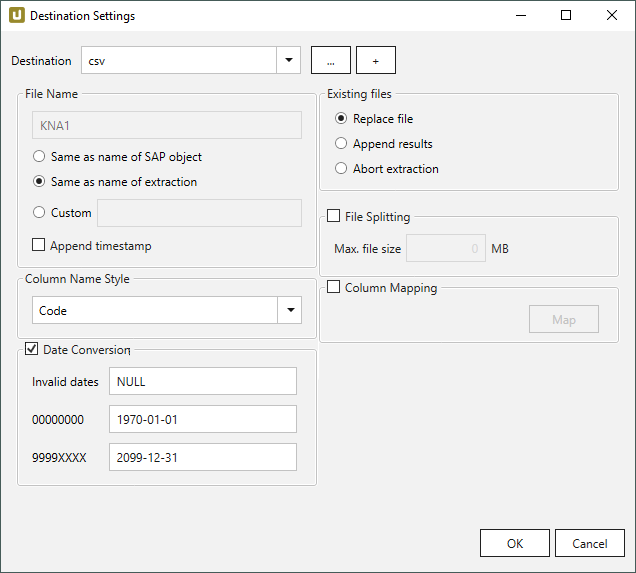

Opening the Destination Settings #

- Create or select an existing extraction, see Getting Started with Xtract Universal.

- Click [Destination]. The window “Destination Settings” opens.

The following settings can be defined for the destination:

Destination Settings #

File Name #

File Name determines the name of the target table. You have the following options:

- Same as name of SAP object: Copy the name of the SAP object

- Same as name of extraction: Adopt name of extraction

- Custom: Define a name of your choice

- Append timestamp: Add the timestamp in the UTC format (_YYYY_MM_DD_hh_mm_ss_fff) to the file name of the extraction

Using Script Expressions as Dynamic File Names

Script expressions can be used to generate a dynamic file name. This allows generating file names that are composed of an extraction’s properties, e.g. extraction name, SAP source object. This scenario supports script expressions based on .NET and the following XU-specific custom script expressions:

| Input | Description |

|---|---|

#{Source.Name}# |

Name of the extraction’s SAP source. |

#{Extraction.ExtractionName}# |

Name of the extraction. If the extraction is part of an extraction group, enter the name of the extraction group before the name of the extraction. Separate groups with a ‘,’, e.g, Tables,KNA1, S4HANA,Tables,KNA1. |

#{Extraction.Type}# |

Extraction type (Table, ODP, BAPI, etc.). |

#{Extraction.SapObjectName}# |

Name of the SAP object the extraction is extracting data from. |

#{Extraction.Timestamp}# |

Timestamp of the extraction. |

#{Extraction.SapObjectName.TrimStart("/".ToCharArray())}# |

Removes the first slash ‘/’ of an SAP object. Example: /BIO/TMATERIAL to BIO/TMATERIAL - prevents creating an empty folder in a file path. |

#{Extraction.SapObjectName.Replace('/', '_')}# |

Replaces all slashes ‘/’ of an SAP object. Example /BIO/TMATERIAL to _BIO_TMATERIAL - prevents splitting the SAP object name by folders in a file path. |

#{Extraction.Context}# |

Only for ODP extractions: returns the context of the ODP object (SAPI, ABAP_CDS, etc). |

#{Extraction.Fields["[NameSelectionFiels]"].Selections[0].Value}# |

Only for ODP extractions: returns the input value of a defined selection / filter. |

#{Odp.UpdateMode}# |

Only for ODP extractions: returns the update mode (Delta, Full, Repeat) of the extraction. |

#{TableExtraction.WhereClause}# |

Only for Table extractions: returns the WHERE clause of the extraction. |

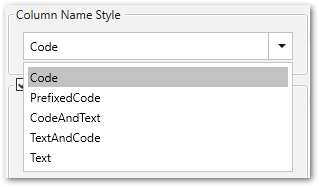

Column Name Style #

Defines the style of the column name. Following options are available:

- Code: The SAP technical column name is used as column name in the destination e.g., MAKTX.

- PrefixedCode: The SAP technical column name is prefixed by SAP object name and the tilde character e.g., MAKT~MAKTX

- CodeAndText: The SAP technical column name and the SAP description separated by an underscore are used as column name in the destination e.g., MAKTX_Material Description (Short Text).

- TextAndCode: The SAP description and the SAP technical column name description separated by an underscore are used as column name in the destination e.g., Material Description (Short Text)_MAKTX.

- Text: The SAP description is used as column name in the destination e.g., Material Description (Short Text).

Date conversion #

Convert date strings

Converts the character-type SAP date (YYYYMMDD, e.g., 19900101) to a special date format (YYYY-MM-DD, e.g., 1990-01-01). Target data uses a real date data-type and not the string data-type to store dates.

Convert invalid dates to

If an SAP date cannot be converted to a valid date format, the invalid date is converted to the entered value. NULL is supported as a value.

When converting the SAP date the two special cases 00000000 and 9999XXXX are checked at first.

Convert 00000000 to

Converts the SAP date 00000000 to the entered value.

Convert 9999XXXX to

Converts the SAP date 9999XXXX to the entered value.

Existing files #

Replace file: The export process overwrites existing files.

Append results: The export process appends new data to an already existing file. See also Column Mapping.

Abort extraction: The process is aborted, if the file already exists.

File Splitting #

File Splitting

Writes extraction data of a single extraction to multiple files.

Each filename is appended by _part[nnn].

Max. file size

The value set in Max. file size determines the maximum size of each file.

Note: The option Max. file size does not apply to gzip files. The size of a gzipped file cannot be determined in advance.

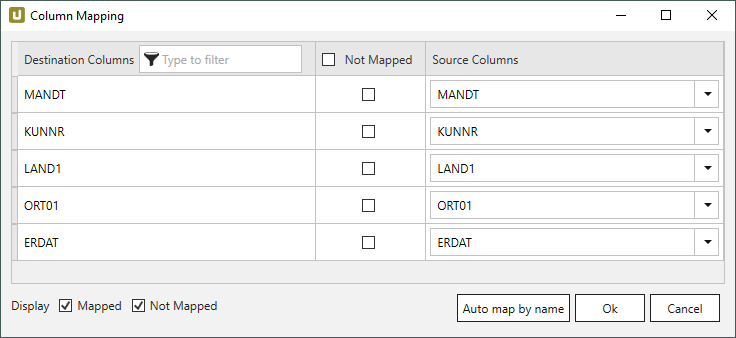

Column Mapping #

Activate Column Mapping when appending data to an existing file or entity that has different column names or a different number of columns.

This can be the case when extracting data from two or more extractions into the same destination file, where the column names of the extraction and the destination file differ.

Note: The column names in the extraction and destination must be unique. If duplicated column names are found, an error message is displayed. The column names must be corrected, before column mapping can be used.

- When working with flat files, ensure that:

a) the XU server and the Designer both have access to the destination file.

b) the output directory and the file name of the extraction match the destination file.

c) the Column Name Style of the extraction and destination file match. - Select the option Append results in the section Existing Files.

- Click [Map] to assign columns. The window “Column Mapping” opens.

Destination Columns displays the names of the columns that are available in the destination file or entity. Not Mapped defines whether or not columns are mapped to the destination columns. Source Columns defines which SAP column is mapped to a destination column.

- a) If the column names of the extraction and the names of the destination columns match, click [Auto map by name].

b) If the column names do not match, assign columns manually by selecting the respective SAP column from the dropdown menu under Source Columns.

c) If a column does not have a counterpart or is not supposed to be appended, activate the checkbox under Not Mapped. - Click [OK] to confirm your input.

When running the extraction the extracted data is added to the destination file or entity as specified in the column mapping.

Tip: In case an error message pops up, click [Show more] to see a description of what caused the error.