The following section describes data extraction to the Amazon S3 cloud storage.

For more information on Amazon S3, see Getting Started with Amazon S3.

Tip: You can install Xtract Universal on an Amazon Elastic Compute Cloud (EC2) instance. See Amazon EC2: Getting Started with Amazon EC2 to deploy an instance in your AWS account on which you can install Xtract Universal. Make sure the instance is deployed in the same region as your SAP solution to reduce latency and optimize performance.

Requirements #

- Existing Amazon Web Services (AWS) Account.

- Either the “Access Keys” (consisting of “access key ID” and “secret access key”) of your AWS user, or an IAM role attached to the EC2 instance Xtract Universal is running on, see Amazon Documentation: Using an IAM role to grant permissions to applications running on Amazon EC2 instances. For more information on the IAM role, see Amazon Documentation: Security best practices in IAM.

- Existing S3 bucket, in which you can upload data.

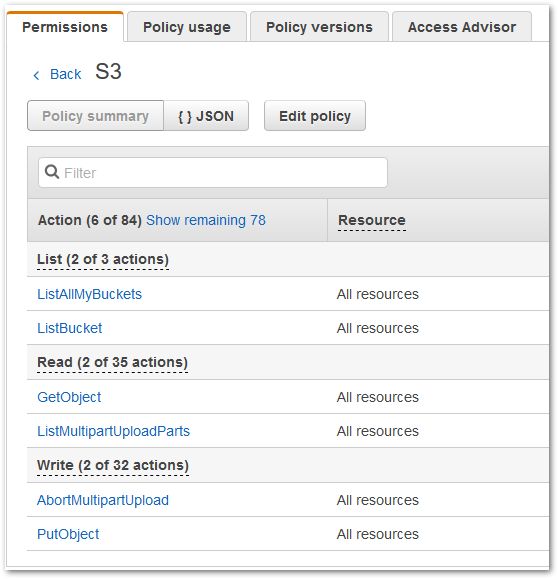

- Sufficient permissions for list, read and write activities on S3. You must grant these rights in the user policy, but you can limit them to certain buckets.

The permissions set in the following example have been tested in a test environment:

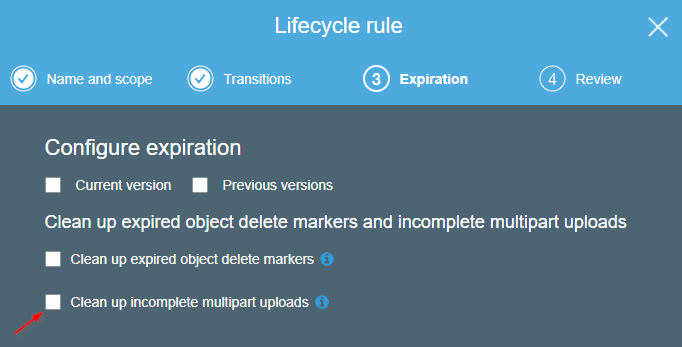
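As a rough illustration of list, read and write permissions that are limited to a single bucket, the following sketch builds an IAM policy document in Python. The bucket name `my-xu-bucket` is a placeholder, and the selection of actions is an assumption rather than the tested set from the screenshot, so adjust it to your own security requirements.

```python
import json


def build_s3_policy(bucket: str) -> dict:
    """Build a policy document granting list, read and write access to one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Bucket-level actions: list objects and pending multipart uploads.
                "Effect": "Allow",
                "Action": [
                    "s3:ListBucket",
                    "s3:GetBucketLocation",
                    "s3:ListBucketMultipartUploads",
                ],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Object-level actions: read, write and clean up multipart uploads.
                "Effect": "Allow",
                "Action": [
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:AbortMultipartUpload",
                    "s3:ListMultipartUploadParts",
                ],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


if __name__ == "__main__":
    # "my-xu-bucket" is a hypothetical bucket name used for illustration only.
    print(json.dumps(build_s3_policy("my-xu-bucket"), indent=2))
```

The resulting JSON can be pasted into the policy editor of the IAM console and attached to the user or role that Xtract Universal uses.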

Note: Xtract Universal uses so-called multipart upload for uploading data to S3. Data extracted from SAP is uploaded to S3 not as one big chunk of data but in smaller parts. These parts are buffered on the S3 side. If the extraction is successful, S3 assembles those parts into one file. While the extraction is still running, this file is not visible on S3.
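The principle of a multipart upload can be sketched in plain Python without any AWS connection. The function names and the part size below are made up for illustration; how Xtract Universal sizes and buffers its parts internally is not described here.

```python
from typing import Iterator, List, Tuple


def split_into_parts(data: bytes, part_size: int) -> Iterator[Tuple[int, bytes]]:
    """Yield (part_number, chunk) pairs; part numbers start at 1 as in S3."""
    if part_size <= 0:
        raise ValueError("part_size must be positive")
    for number, offset in enumerate(range(0, len(data), part_size), start=1):
        yield number, data[offset:offset + part_size]


def assemble(parts: List[Tuple[int, bytes]]) -> bytes:
    """Join the chunks in part-number order, as S3 does when an upload completes."""
    ordered = sorted(parts, key=lambda part: part[0])
    return b"".join(chunk for _, chunk in ordered)


if __name__ == "__main__":
    payload = b"0123456789"
    uploaded = list(split_into_parts(payload, part_size=4))
    # The order in which parts arrive does not matter; the part number decides.
    print(assemble(list(reversed(uploaded))) == payload)
```

In the S3 API, these phases correspond to the operations CreateMultipartUpload, UploadPart and CompleteMultipartUpload. An upload that is never completed or aborted leaves its parts stored in the bucket.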

Recommendation: Enable S3 versioning or perform data backups regularly, see Amazon AWS: Getting Started - Backup & Restore with AWS.

Extraction failed #

If the extraction fails in a controlled way, for example because of an exception in the Xtract Universal software, Xtract Universal deletes the already uploaded parts from S3. In case of an “uncontrolled” extraction failure, for example due to network issues, Xtract Universal cannot delete the uploaded parts from S3.

We therefore recommend configuring S3 to delete unused multiparts automatically, e.g. after one day. You can find this setting by selecting a bucket and opening the “Management” tab. Select “Lifecycle” and “Add lifecycle rule” and create a rule for deleting unused multiparts.
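The same lifecycle rule can also be expressed as a configuration document instead of clicking through the console. The following sketch only builds that document; the rule ID and the helper name are hypothetical, and the empty prefix is an assumption that applies the rule to the whole bucket.

```python
def abort_incomplete_uploads_rule(days: int = 1,
                                  rule_id: str = "abort-incomplete-multipart") -> dict:
    """Build a lifecycle configuration that removes unfinished multipart uploads."""
    if days < 1:
        raise ValueError("days must be at least 1")
    return {
        "Rules": [
            {
                "ID": rule_id,                  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": ""},       # empty prefix: whole bucket
                "AbortIncompleteMultipartUpload": {"DaysAfterInitiation": days},
            }
        ]
    }


if __name__ == "__main__":
    print(abort_incomplete_uploads_rule(days=1))
```

With the AWS SDK for Python (boto3), such a dictionary would typically be passed as the `LifecycleConfiguration` argument of `put_bucket_lifecycle_configuration`, together with the bucket name.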

Connection #

Adding a Destination #

- In the main window of the Designer, navigate to Server > Manage Destinations. The window “Manage Destinations” opens.

- Click [Add] to create a new destination. The window “Destination Details” opens.

- Enter a Name for the destination.

- Select the destination Type from the drop-down menu.

Destination Details #

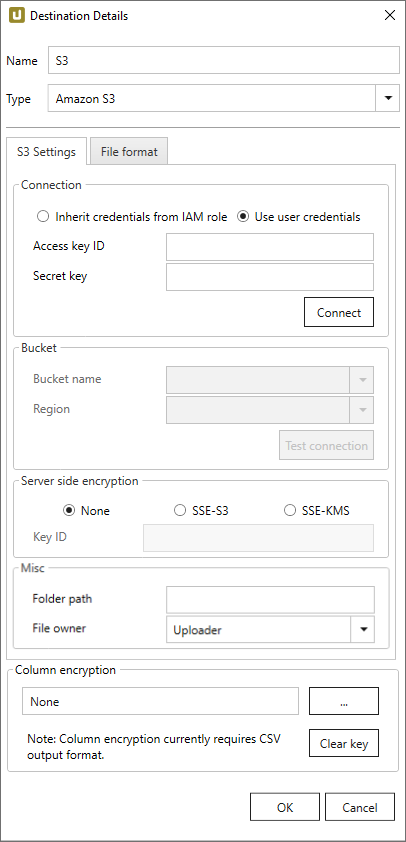

S3 Settings #

Connection

Inherit Credentials from IAM role

The credentials and permissions of the IAM role assigned to the EC2 instance on which Xtract Universal is running are used for authentication.

For more information on the IAM role, see Amazon Documentation: Security best practices in IAM.

Access key ID and Secret key

Preferred authentication method for AWS. Determine the values via AWS Identity and Access Management (IAM).

More information is available in the official AWS documentation.

Connect

After entering the access key ID and secret key, click [Connect]. After connecting successfully, select the bucket name and region.

Bucket

Bucket name and Region

Select a bucket and the region in which the bucket is located. The SAP data is extracted into the selected bucket.

Note: The drop-down menus list all available buckets and regions. Make sure to select the correct combination of bucket and region. Validate the connectivity to the selected bucket by clicking [Test Connection].

Test Connection

Validates the combination of bucket and region and ensures that the bucket is accessible from Xtract Universal using the entered access keys.

Server-side encryption

Choose how to encrypt the data after it is uploaded to S3.

Note: The setting “Server-side encryption” does not relate to transport encryption between Xtract Universal and S3. By default, the channel for sending data to S3 is always encrypted.

- None: Server-side encryption of data is not active.

- SSE-S3: Encrypts data using the S3 encryption key that is available by default for the account (S3 Managed Encryption Keys).

- SSE-KMS and Key ID: Encryption using a custom encryption key created on AWS (AWS Key Management Service). The key can be created on the AWS website.
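For readers who script their own uploads, the three options map onto request parameters of the S3 PutObject operation. The sketch below is a hypothetical helper (its name and the option strings are assumptions, not part of Xtract Universal) that returns the matching parameters. It requires a key ID for SSE-KMS to mirror the dialog above.

```python
from typing import Optional


def sse_upload_args(mode: str, kms_key_id: Optional[str] = None) -> dict:
    """Translate an encryption option into S3 PutObject request parameters."""
    if mode == "None":
        return {}  # no server-side encryption requested explicitly
    if mode == "SSE-S3":
        return {"ServerSideEncryption": "AES256"}
    if mode == "SSE-KMS":
        if not kms_key_id:
            raise ValueError("SSE-KMS requires a key ID")
        return {"ServerSideEncryption": "aws:kms", "SSEKMSKeyId": kms_key_id}
    raise ValueError(f"unknown encryption mode: {mode!r}")


if __name__ == "__main__":
    # "example-key-id" is a placeholder, not a real KMS key.
    print(sse_upload_args("SSE-KMS", kms_key_id="example-key-id"))
```

With boto3, the returned dictionary could be unpacked into `put_object(...)` or passed as `ExtraArgs` to `upload_file(...)`.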

Misc

All settings in Misc are optional.

Folder Path

Enter the directory to upload files into.

File Owner

If you upload files as an AWS user of an Account A to an Account B, you can select the option “Bucket Owner”.

Without a declared owner, uploaded files cannot be opened directly.

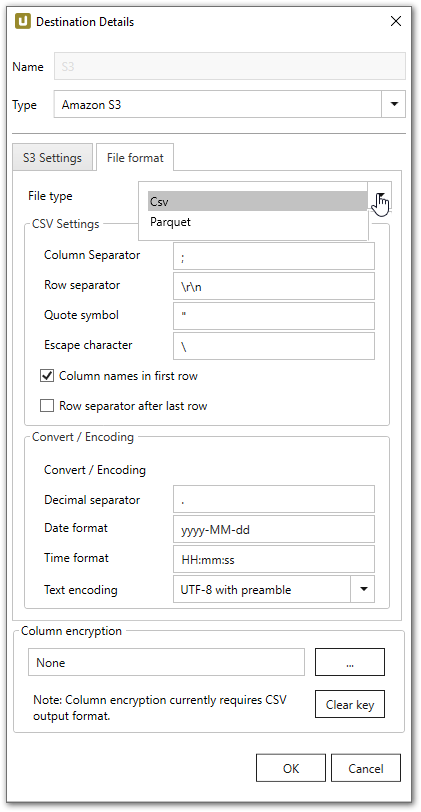

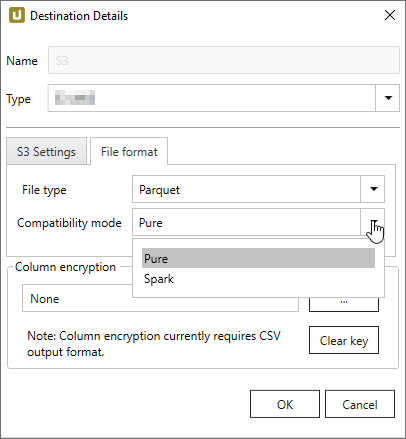

File Format #

File type

Select the required file format. You can choose between Parquet and CSV.

CSV Settings

The settings for file type CSV correspond to the Flat File CSV settings.

Parquet Settings

The following compatibility modes are available:

- Pure

- Spark

- BigQuery

Spark does not support the data types used in Pure mode, so other data types need to be used.

In Spark mode, special characters and spaces are replaced with an underscore _.

| SAP | Pure / BigQuery | Spark |
|---|---|---|
| INT1 | UINT_8 | INT16 |
| TIMS | TIME_MILLIS | UTF8 |
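The differences between the modes can be sketched as a lookup table plus a renaming function. Which characters count as “special” is not defined above, so the sketch assumes everything except ASCII letters, digits and the underscore; the names `PARQUET_TYPES` and `spark_column_name` are hypothetical.

```python
import re

# Type mapping taken from the table above (Pure and BigQuery share a column).
PARQUET_TYPES = {
    "INT1": {"pure": "UINT_8", "spark": "INT16"},
    "TIMS": {"pure": "TIME_MILLIS", "spark": "UTF8"},
}


def spark_column_name(name: str) -> str:
    """Replace spaces and special characters with an underscore.

    Assumption: "special" means anything other than ASCII letters,
    digits and the underscore itself.
    """
    return re.sub(r"[^0-9A-Za-z_]", "_", name)


if __name__ == "__main__":
    print(PARQUET_TYPES["INT1"]["spark"])
    print(spark_column_name("Material Description (Short Text)"))
```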

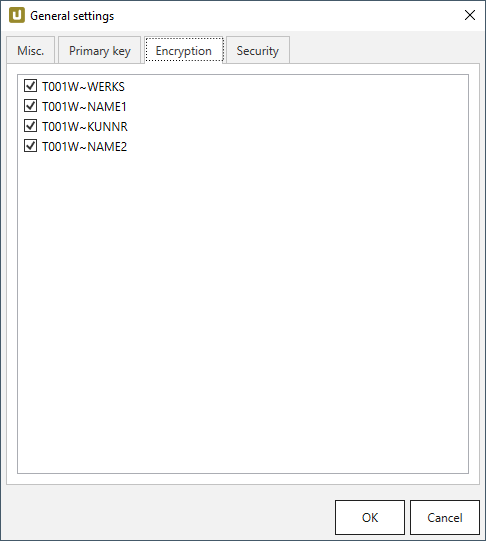

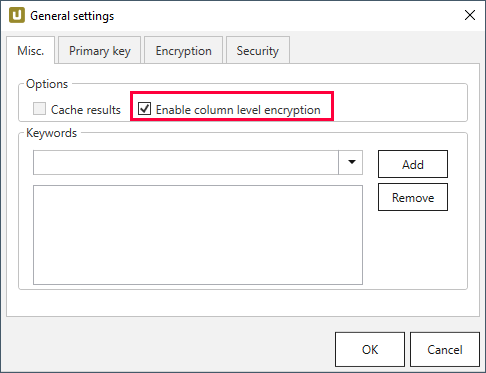

Column encryption #

The “Column Encryption” feature enables users to encrypt columns in the extracted data set before uploading them to the destination. By encrypting the columns you can ensure the safety of sensitive information. You can store data in its encrypted form or decrypt it right away.

The feature also supports random access, meaning that the data is decryptable at any starting point. Because random access has a significant overhead, it is not recommended to use column encryption for encrypting the whole data set.

How to proceed

Note: The user must provide an RSA public key.

- Select the columns to encrypt under Extraction settings > General settings > Encryption.

- Make sure the Enable column level encryption checkbox is activated under Extraction settings > General settings > Misc.

- Click […] in Destination Details > Column Encryption to import the public key as an .xml file.

- Run the extraction.

- Wait for Xtract Universal to upload the encrypted data and the “metadata.json” file to the destination.

- Manually or automatically trigger your decryption routine.

Decryption

The decryption depends on the destination environment. Implementation samples for Azure Storage, AWS S3 and local flat file CSV environments are provided on GitHub. The samples include the cryptographic part, which is open source, and the interface for reading the CSV data and “metadata.json”, which is not open source.

Technical Information

The encryption is implemented as a hybrid cryptosystem.

This means that a randomized AES session key is created when starting the extraction.

The data is then encrypted via the AES-GCM algorithm with the session key.

The implementation uses the recommended length of 96 bits for the IV.

To guarantee random access, each cell gets its own IV/nonce and Message Authentication Code (MAC).

The MAC is the authenticity token in GCM providing a signature for the data.

In the resulting encrypted data set, the encrypted cells are assembled like this:

IV|ciphertext|MAC

The IV is encoded as a 7-bit integer. The session key is then encrypted with the RSA public key provided by the user. This encrypted session key is uploaded to the destination as a “metadata.json” file, including a list of the encrypted columns and formatting information of the destination.
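To make the cell layout IV|ciphertext|MAC more tangible, the following sketch splits a raw byte string into its three components. It is a simplification under explicit assumptions: a fixed 12-byte IV (96 bits), a full-length 16-byte GCM tag, and no additional framing. It ignores the 7-bit integer encoding mentioned above and any text encoding of the cell, so the samples on GitHub remain the authoritative reference for real data.

```python
from typing import NamedTuple

IV_LEN = 12   # 96 bits, the IV length stated above
MAC_LEN = 16  # assumption: full-length 128-bit GCM authentication tag


class EncryptedCell(NamedTuple):
    iv: bytes
    ciphertext: bytes
    mac: bytes


def split_cell(raw: bytes) -> EncryptedCell:
    """Split IV|ciphertext|MAC, assuming fixed lengths and no extra framing."""
    if len(raw) < IV_LEN + MAC_LEN:
        raise ValueError("cell is too short to contain an IV and a MAC")
    return EncryptedCell(
        iv=raw[:IV_LEN],
        ciphertext=raw[IV_LEN:len(raw) - MAC_LEN],
        mac=raw[len(raw) - MAC_LEN:],
    )


if __name__ == "__main__":
    cell = split_cell(b"I" * 12 + b"secret" + b"M" * 16)
    print(cell.ciphertext)
```

Because every cell carries its own IV and MAC, each cell can be split and decrypted independently of its neighbors, which is what makes random access possible.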

Connection Retry #

Connection retry is a built-in function of the AWS S3 destination. The retry function is automatically activated.

Connection retry prevents extractions from failing in case of transient connection interruptions to AWS S3. For more general information about retry strategies in an AWS S3 environment, see the official AWS Help.

Xtract Universal follows an exponential retry strategy. The selected exponential strategy results in 7 retry attempts and an overall timespan of 140 seconds. If a connection is not established during the timespan of 140 seconds, the extraction fails.
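An exponential retry strategy can be sketched as follows. The individual wait times used by Xtract Universal are not stated here, so the base delay and factor below are placeholders that do not reproduce the 140 seconds; only the number of 7 retries is taken from the text. The `sleep` parameter is injectable so that the logic can be tried out without actually waiting.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 1.0, factor: float = 2.0) -> List[float]:
    """Return one wait time per retry, growing exponentially."""
    return [base * factor ** i for i in range(retries)]


def call_with_retry(operation: Callable[[], T],
                    delays: List[float],
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Run operation; after a ConnectionError wait and retry once per delay."""
    for delay in delays:
        try:
            return operation()
        except ConnectionError:
            sleep(delay)
    # Last attempt: an error raised here is no longer caught.
    return operation()


if __name__ == "__main__":
    print(backoff_delays(7))  # hypothetical schedule, not the product's values
```

If the operation still fails after the last retry, the exception propagates to the caller, which corresponds to the extraction failing once the retry timespan is exhausted.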

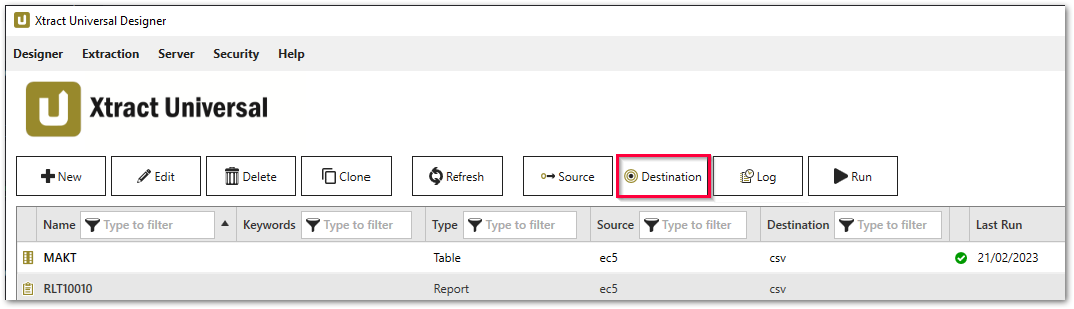

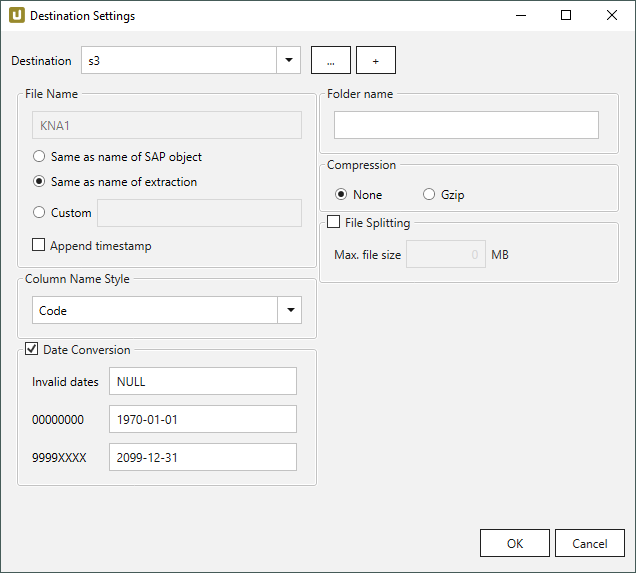

Settings #

Opening the Destination Settings #

- Create or select an existing extraction, see Getting Started with Xtract Universal.

- Click [Destination]. The window “Destination Settings” opens.

The following settings can be defined for the destination:

Destination Settings #

File Name #

File Name determines the name of the target file. You have the following options:

- Same as name of SAP object: Copy the name of the SAP object

- Same as name of extraction: Adopt name of extraction

- Custom: Define a name of your choice

- Append timestamp: Add the timestamp in the UTC format (_YYYY_MM_DD_hh_mm_ss_fff) to the file name of the extraction

Note: If the name of an object does not begin with a letter, it will be prefixed with an ‘x’, e.g. an object by the name _namespace_tabname.csv will be renamed x_namespace_tabname.csv when uploaded to the destination.

This is to ensure that all uploaded objects are compatible with Azure Data Factory, Hadoop and Spark, which require object names to begin with a letter or give special meaning to objects whose names start with certain non-alphabetic characters.
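The timestamp suffix and the prefixing rule can be sketched in a few lines of Python. Both helper names are hypothetical, and treating only ASCII letters as “letters” is an assumption.

```python
from datetime import datetime, timezone


def timestamp_suffix(moment: datetime) -> str:
    """Format a timezone-aware datetime as _YYYY_MM_DD_hh_mm_ss_fff in UTC."""
    utc = moment.astimezone(timezone.utc)
    millis = utc.microsecond // 1000
    return utc.strftime("_%Y_%m_%d_%H_%M_%S_") + f"{millis:03d}"


def ensure_leading_letter(name: str) -> str:
    """Prefix the name with 'x' if it does not begin with a letter."""
    first = name[:1]
    if first.isascii() and first.isalpha():  # assumption: ASCII letters only
        return name
    return "x" + name


if __name__ == "__main__":
    print(ensure_leading_letter("_namespace_tabname.csv"))
    print(timestamp_suffix(datetime.now(timezone.utc)))
```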

Using Script Expressions as Dynamic File Names

Script expressions can be used to generate a dynamic file name. This allows generating file names that are composed of an extraction’s properties, e.g. extraction name, SAP source object. This scenario supports script expressions based on .NET and the following XU-specific custom script expressions:

| Input | Description |
|---|---|
| #{Source.Name}# | Name of the extraction’s SAP source. |
| #{Extraction.ExtractionName}# | Name of the extraction. If the extraction is part of an extraction group, enter the name of the extraction group before the name of the extraction. Separate groups with a ‘,’, e.g., Tables,KNA1 or S4HANA,Tables,KNA1. |
| #{Extraction.Type}# | Extraction type (Table, ODP, BAPI, etc.). |
| #{Extraction.SapObjectName}# | Name of the SAP object the extraction is extracting data from. |
| #{Extraction.Timestamp}# | Timestamp of the extraction. |
| #{Extraction.SapObjectName.TrimStart("/".ToCharArray())}# | Removes the first slash ‘/’ of an SAP object. Example: /BIO/TMATERIAL to BIO/TMATERIAL - prevents creating an empty folder in a file path. |
| #{Extraction.SapObjectName.Replace('/', '_')}# | Replaces all slashes ‘/’ of an SAP object. Example: /BIO/TMATERIAL to _BIO_TMATERIAL - prevents splitting the SAP object name by folders in a file path. |
| #{Extraction.Context}# | Only for ODP extractions: returns the context of the ODP object (SAPI, ABAP_CDS, etc). |
| #{Extraction.Fields["[NameSelectionFields]"].Selections[0].Value}# | Only for ODP extractions: returns the input value of a defined selection / filter. |
| #{Odp.UpdateMode}# | Only for ODP extractions: returns the update mode (Delta, Full, Repeat) of the extraction. |
| #{TableExtraction.WhereClause}# | Only for Table extractions: returns the WHERE clause of the extraction. |
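The effect of the two string expressions in the table can be reproduced outside of Xtract Universal. The following Python sketch mimics the .NET methods `TrimStart` and `Replace` with their Python counterparts; the function names are made up for illustration.

```python
def trim_start(value: str, chars: str = "/") -> str:
    """Python counterpart of .NET TrimStart: strip the given leading characters."""
    return value.lstrip(chars)


def replace_slashes(value: str, replacement: str = "_") -> str:
    """Python counterpart of .NET Replace('/', '_')."""
    return value.replace("/", replacement)


if __name__ == "__main__":
    sap_object = "/BIO/TMATERIAL"
    print(trim_start(sap_object))       # BIO/TMATERIAL
    print(replace_slashes(sap_object))  # _BIO_TMATERIAL
```

Note that `TrimStart`, like `lstrip`, removes every leading occurrence of the character, so an object name starting with two slashes would lose both.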

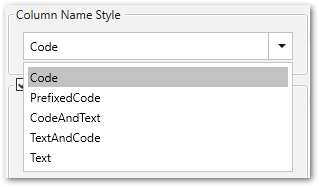

Column Name Style #

Defines the style of the column name. The following options are available:

- Code: The SAP technical column name is used as column name in the destination e.g., MAKTX.

- PrefixedCode: The SAP technical column name is prefixed by SAP object name and the tilde character e.g., MAKT~MAKTX.

- CodeAndText: The SAP technical column name and the SAP description separated by an underscore are used as column name in the destination e.g., MAKTX_Material Description (Short Text).

- TextAndCode: The SAP description and the SAP technical column name separated by an underscore are used as column name in the destination e.g., Material Description (Short Text)_MAKTX.

- Text: The SAP description is used as column name in the destination e.g., Material Description (Short Text).
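The five styles can be summarized in a small function. This is only an illustration of the naming rules listed above; the function name and its parameters are hypothetical.

```python
def column_name(style: str, code: str, text: str, sap_object: str) -> str:
    """Compose a destination column name according to the selected style."""
    styles = {
        "Code": code,
        "PrefixedCode": f"{sap_object}~{code}",
        "CodeAndText": f"{code}_{text}",
        "TextAndCode": f"{text}_{code}",
        "Text": text,
    }
    if style not in styles:
        raise ValueError(f"unknown column name style: {style!r}")
    return styles[style]


if __name__ == "__main__":
    for style in ("Code", "PrefixedCode", "CodeAndText", "TextAndCode", "Text"):
        print(style, "->", column_name(style, "MAKTX",
                                       "Material Description (Short Text)", "MAKT"))
```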

Date conversion #

Convert date strings

Converts the character-type SAP date (YYYYMMDD, e.g., 19900101) to a special date format (YYYY-MM-DD, e.g., 1990-01-01). The target data then uses a real date data type instead of a string data type to store dates.

Convert invalid dates to

If an SAP date cannot be converted to a valid date format, the invalid date is converted to the entered value. NULL is supported as a value.

When converting the SAP date, the two special cases 00000000 and 9999XXXX are checked first.

Convert 00000000 to

Converts the SAP date 00000000 to the entered value.

Convert 9999XXXX to

Converts the SAP date 9999XXXX to the entered value.
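The order of the checks can be sketched as follows: first the two special cases, then the regular conversion, and finally the fallback for invalid dates. The function and parameter names are hypothetical, and `None` stands in for NULL.

```python
from datetime import date, datetime
from typing import Optional


def convert_sap_date(value: str,
                     zero_value: Optional[date] = None,
                     year_9999_value: Optional[date] = None,
                     invalid_value: Optional[date] = None) -> Optional[date]:
    """Convert a YYYYMMDD string to a date, handling the special cases first."""
    if value == "00000000":
        return zero_value
    if len(value) == 8 and value.startswith("9999"):
        return year_9999_value
    if len(value) != 8 or not value.isdigit():
        return invalid_value
    try:
        return datetime.strptime(value, "%Y%m%d").date()
    except ValueError:
        # Eight digits that do not form a calendar date, e.g. 20230230.
        return invalid_value


if __name__ == "__main__":
    print(convert_sap_date("19900101"))  # 1990-01-01
    print(convert_sap_date("20230230"))  # None
```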

Folder Name #

Enter a folder name without slashes here if you want the data to be extracted to a folder within an S3 bucket.

Subfolders are also supported and can be entered as follows: Folder/Subfolder1/Subfolder2/

Using Script Expressions as Dynamic Folder Paths

Script expressions can be used to generate a dynamic folder path. This allows generating folder paths that are composed of an extraction’s properties, e.g., extraction name, SAP source object. The described scenario supports script expressions based on .NET and the following XU-specific custom script expressions:

| Input | Description |
|---|---|
| #{Source.Name}# | Name of the extraction’s SAP source. |
| #{Extraction.ExtractionName}# | Name of the extraction. If the extraction is part of an extraction group, enter the name of the extraction group before the name of the extraction. Separate groups with a ‘,’, e.g., Tables,KNA1 or S4HANA,Tables,KNA1. |
| #{Extraction.Type}# | Extraction type (Table, ODP, BAPI, etc.). |
| #{Extraction.SapObjectName}# | Name of the SAP object the extraction is extracting data from. |
| #{Extraction.Timestamp}# | Timestamp of the extraction. |
| #{Extraction.SapObjectName.TrimStart("/".ToCharArray())}# | Removes the first slash ‘/’ of an SAP object. Example: /BIO/TMATERIAL to BIO/TMATERIAL - prevents creating an empty folder in a file path. |
| #{Extraction.SapObjectName.Replace('/', '_')}# | Replaces all slashes ‘/’ of an SAP object. Example: /BIO/TMATERIAL to _BIO_TMATERIAL - prevents splitting the SAP object name by folders in a file path. |
| #{Extraction.Context}# | Only for ODP extractions: returns the context of the ODP object (SAPI, ABAP_CDS, etc). |
| #{Extraction.Fields["[NameSelectionFields]"].Selections[0].Value}# | Only for ODP extractions: returns the input value of a defined selection / filter. |
| #{Odp.UpdateMode}# | Only for ODP extractions: returns the update mode (Delta, Full, Repeat) of the extraction. |
| #{TableExtraction.WhereClause}# | Only for Table extractions: returns the WHERE clause of the extraction. |
| #{Extraction.Fields["[0D_NW_CODE]"].Selections[0].Value}# | Only for BWCube extractions (MDX mode): returns the input value of a defined selection. |
| #{Extraction.Fields["[0D_NW_CHANN]"].RangeSelections[0].LowerValue}# | Only for BWCube extractions (MDX mode): returns the lower input value of a defined selection range. |
| #{Extraction.Fields["[0D_NW_CHANN]"].RangeSelections[0].UpperValue}# | Only for BWCube extractions (MDX mode): returns the upper input value of a defined selection range. |
| #{Extraction.Fields["0D_NW_CODE"].Selections[0].Value}# | Only for BWCube extractions (BICS mode): returns the input value of a defined selection. |
| #{Extraction.Fields["0D_NW_CHANN"].RangeSelections[0].LowerValue}# | Only for BWCube extractions (BICS mode): returns the lower input value of a defined selection range. |
| #{Extraction.Fields["0D_NW_CHANN"].RangeSelections[0].UpperValue}# | Only for BWCube extractions (BICS mode): returns the upper input value of a defined selection range. |

Compression #

None

The data is transferred uncompressed and stored as a CSV file.

gzip

The data is transferred compressed and stored as a gz file.

Note: Compression is only available for the CSV file format, see Connection: File Format.

File Splitting #

File Splitting

Writes extraction data of a single extraction to multiple files in the cloud.

The suffix _part[nnn] is appended to each file name.

Max. file size

The value set in Max. file size determines the maximum size of each stored file.

Note: The option Max. file size does not apply to gzip files. The size of a gzipped file cannot be determined in advance.
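File splitting by maximum size can be sketched as follows. The sketch keeps rows intact and starts a new file as soon as the next row would exceed the limit. The three-digit, zero-based numbering and the position of the suffix before the file extension are assumptions about the `_part[nnn]` pattern, and the function name is hypothetical.

```python
from typing import Dict, Iterable, List


def split_rows(rows: Iterable[str], base_name: str, max_bytes: int,
               extension: str = ".csv") -> Dict[str, List[str]]:
    """Distribute rows over files so that no file exceeds max_bytes.

    A single row larger than max_bytes still gets a file of its own.
    """
    files: Dict[str, List[str]] = {}
    current: List[str] = []
    size = 0
    part = 0  # assumption: numbering starts at 000

    def flush() -> None:
        nonlocal current, size, part
        files[f"{base_name}_part{part:03d}{extension}"] = current
        current, size, part = [], 0, part + 1

    for row in rows:
        row_size = len(row.encode("utf-8"))
        if current and size + row_size > max_bytes:
            flush()
        current.append(row)
        size += row_size
    if current:
        flush()
    return files


if __name__ == "__main__":
    for name, content in split_rows(["a,b\n"] * 5, "KNA1", max_bytes=8).items():
        print(name, len(content))
```

The sketch measures uncompressed bytes. For gzip output the compressed size is only known after the data has been written, which is why a size limit cannot be enforced in advance.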

Related Links #

- Knowledge Base Article: Run Xtract Universal in a VM on AWS EC2

- Amazon AWS: Getting Started - Backup & Restore with AWS

- Amazon S3: Getting Started with Amazon S3

- Amazon EC2: Getting Started with Amazon EC2

- Amazon Documentation: Amazon EC2 security groups for Linux instances

- Amazon Documentation: Security best practices in IAM

- Amazon Documentation: Overview of managing access